Levelling with the tech giants

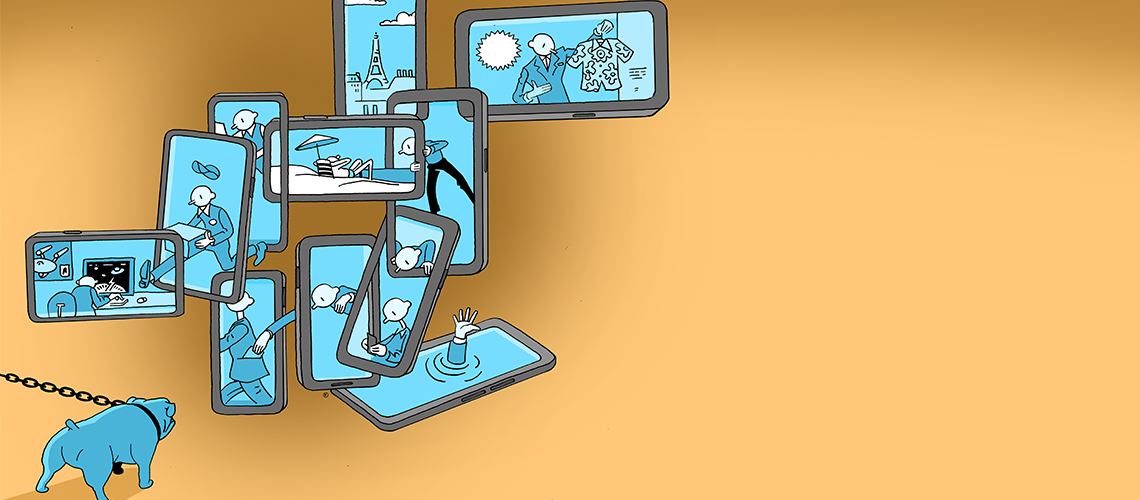

Image caption: Illustration by: thilo-rothacker.com

The scale at which the tech giants operate – and their significant presence in our lives – makes holding them to account a challenge. Global Insight investigates signs that governments, activists, unions and courts – and even tech companies themselves – are, however, beginning to do so.

After years of Big Tech domination, there are small signs that the world is levelling with these digital giants. Perhaps most obviously, there’s been greater governmental regulatory intervention. In September 2023, the EU identified the 22 ‘gatekeeper’ services run by the major tech companies that will face stringent new rules as part of its Digital Markets Act (DMA). In the autumn of 2022, Meta also accepted an order by the UK competition watchdog, the Competition and Markets Authority (CMA), to sell animated images platform Giphy.

The ‘differing ecosystems’ – the various social media sites and services provided by Big Tech players – ‘have become so omnipresent, there’s almost a sense that people can’t live without them’, says Ben Lasserson, a competition partner in Mishcon de Reya’s Innovation department. But he doesn’t believe the likes of Meta or Google are invulnerable. ‘We’re at a sort of inflection point’, he says, comparing the debates concerning the technology sector to those that led to the break-up of the 19th-century US railroads, ‘also deemed too big to fail at the time’.

The green shoots of competition law

Competition law is one way of creating checks and balances. ‘Each of the Big Tech giants is essentially a monopolist in their own ecosystem’, says Lasserson. ‘For businesses operating in the digital space – think app developers for mobile devices, manufacturers selling on Amazon or businesses wanting to use Google or Meta to reach customers through online advertising – there is the ever-present risk that the Big Tech company in question might suddenly, and arbitrarily, change the rules on them.’ Coupled with this, he adds, ‘a lot of the Big Tech services appear to be free, when, in fact, what they’re doing is tracking an individual’s digital and online activity in order to sell that data profile in the context of targeted advertising’.

Lasserson highlights ‘an almost widespread resignation’ that these are simply the costs of having access to such services. But he believes ‘that the tide is starting to turn on that front’. He points to the Court of Justice of the European Union’s (CJEU) landmark judgment in Meta Platforms and Others v Bundeskartellamt, in July 2023, as a possible sign. The CJEU confirmed that a national competition authority can take a breach of the EU General Data Protection Regulation (GDPR) into account when finding an abuse of a dominant position in digital markets, on the reasoning that data obtained in this way gives a company an unfair competitive advantage. ‘The European courts started to look at Meta’s actions and expressly flagged the idea that a breach of data protection law can be considered in the context of a breach of competition law’, explains Lasserson.

Each of the Big Tech giants is essentially a monopolist in their own ecosystem

Ben Lasserson

Partner, Mishcon de Reya

The CMA’s ‘quite bold position’ in requiring that Meta sell Giphy – following their merger – is another potential portent of future thinking, he says. ‘In contrast to many other regulators around the world, [the CMA] stuck its neck out and said, “no, we’re going to work off a new approach to thinking about competition law.”’

The CMA’s revised Merger Assessment Guidelines from 2021 define this new approach – the theory of ‘dynamic competition’ – as applying when a company’s entry or expansion into a market is based on ‘the opportunity to win new sales and profits, which may in part be “stolen” from the other merger [company]’, Lasserson explains. It says we ‘can’t just look at static market shares’, but rather, ‘we need to think about how markets develop and how parties operate in the digital space’. Using this assessment, the CMA forced Meta to sell Giphy.

However, while Lasserson observes ‘a willingness by the CMA to approach things with a slightly broader view’, he strikes a note of caution, and says ‘it’s too early to tell whether that’s now going to be the norm’. This is echoed by other commentators: we shouldn’t assume that the green shoots of new approaches to assessing the practices of digital sector heavyweights will quickly turn into forests of new legislation and regulatory enforcement, particularly when these companies have become such powerfully pervasive, multi-sector lynchpins of our daily life.

The extent to which regulatory authorities can enjoin Big Tech to alter their practices either through fines or enforcement also varies between jurisdictions. In Japan, for instance, the data protection authority isn’t ‘enthusiastic about investigating or sanctioning social media companies’ right now, says Takashi Nakazaki, former Chair of the IBA Technology Disputes Subcommittee and a partner in Tokyo-based firm Anderson Mori & Tomotsune’s Intellectual Property and Technology practice group. He says that the Japanese government is watching what other countries are doing and adds that, from a competition law perspective, it has recently begun to implement new legislation focused on data protection and social media platforms, such as the Act on Improving Transparency and Fairness of Digital Platforms, which came into force in 2021.

Angela Flannery is Vice-Chair of the IBA Communications Law Committee and a telecoms, media and technology partner at specialist Australian regulatory firm Quay Law Partners. She’s sceptical about the speed with which protections for Australian users and businesses from the impact of Big Tech in the digital sector are being implemented. In 2019, the Australian Competition and Consumer Commission (ACCC) released its report from ‘what everyone calls a “groundbreaking inquiry” into digital platforms’, she says. It acknowledged that, ‘yes, Google Maps and some other platform services are great’, but also that these platforms were ‘creating enormous problems for the Australian economy – in terms of data practices but also taking advertising revenue from traditional media’. However, five years later, of the ACCC’s numerous recommendations, only a few have been implemented by the Australian government.

Flannery highlights ‘the hard slog’ the government faced in implementing just one of the recommendations: the News Media Bargaining Code (2021), which forced Google and Facebook to the table to negotiate payment with Australian media companies for their content. ‘The then government was battered and beaten by the platforms in pushing that reform’, she says. The legislation creating the Code passed in early 2021, but not before Facebook briefly blocked all news links for Australian users. She suspects the cautiousness she’s detecting in the current government’s slow progress in taking forward the ACCC’s other suggestions is because it wants to avoid such a situation happening again. ‘Largely, the [recommendations] relating to consumer and competition harms haven’t been implemented’, Flannery explains.

Neither Google nor Meta responded to Global Insight’s requests for comment. ByteDance and Amazon were also contacted for this article, but did not respond.

Seeing Big Tech in court

Where it can feel as if governments are dragging their feet, one means of holding technology companies accountable is private litigation by individuals, activists and law firms. This can be an uncertain and often costly route, but it thrusts technology companies into the unwelcome glare of the public spotlight. For example, in late December 2023, Google agreed to settle a $5bn privacy lawsuit alleging it had spied on people using the ‘incognito’ mode on its Chrome browser. The settlement’s terms are as yet undisclosed, and Google didn’t respond to Global Insight’s request for comment.

But bringing a lawsuit against a technology company can be made challenging by the varying levels of protection they’re afforded by different legal systems. Nakazaki says that, currently, there’s no class action system in Japan to bring privacy-related actions. Meanwhile, in the US, for the purpose of most civil claims, Section 230 of the Communications Decency Act codifies a broad principle that online services aren’t considered the speakers or publishers of material posted by their users. In recent years, though, says Luke Platzer, a litigator at Jenner & Block’s Communications, Internet and Technology practice in Washington, DC, ‘a lot of creative energy has gone into trying to overcome the Section 230 defence through creative framing of plaintiffs’ claims’. This often takes the form of ‘trying to predicate liability on online design choices’, in terms of how content is displayed or promoted.

A landmark example is the 2023 case AM v Omegle.com, in which the plaintiff’s lawyers sought to circumvent the ‘speaker or publisher’ issue by instead bringing a product liability suit against online chat site Omegle. They alleged that the site’s design was defective because the type of user-to-user harm their client suffered – online grooming – was foreseeable. Product liability cases have become a trend in the past year or so, with other platforms facing similar suits.

AM v Omegle.com was settled before reaching court, but it came close to trial and the site’s founder subsequently shut down the site. In a farewell note, Omegle’s founder said that while the platform ‘punched above its weight in content moderation […] the stress and expense of this fight [against misuse] – coupled with the existing stress and expense of operating Omegle […] are simply too much’.

Public lawyer Rosa Curling is a director at Foxglove, a UK-based group that fights for ‘tech justice’. She believes that taking the tech sector heavyweights to court is currently the best way to force them to follow the rule of law, ‘because they have so many resources, they genuinely feel like they’re above it’. Since 2019, Foxglove has been a thorn in the side of Big Tech, challenging its practices and alleged lack of transparency.

While other campaigners have pursued various routes, which Curling supports, Foxglove felt that ‘what was missing was challenging [tech companies’] power from a labour rights angle’. A flashpoint for these concerns was the firing of Daniel Motaung – a South African Facebook moderator who was let go from Sama, an outsourcing company used at the time by Meta, in 2019. He subsequently sued Meta and Sama over post-traumatic stress disorder that he says was caused by exposure to distressing material without adequate support, and over unfair dismissal that he says was connected to his efforts to unionise to create better working conditions for himself and his colleagues. Foxglove brought the case in partnership with Kenyan law firm Nzili & Sumbi Advocates.

In February 2023, in a decision seen as an accountability milestone by campaigners, Kenya’s employment and labour relations court ruled that Meta was a ‘proper party’ to the litigation and allowed the case to proceed. Meta said it would appeal.

The reality, says Curling, is that content moderators are key to the company’s search engine optimisation policy. ‘And their entire working conditions are set by software designed by Meta’, she says. The case is working its way through the courts. Meta didn’t respond to a request for comment from Global Insight but has stated that it requires partners ‘to provide industry-leading pay, benefits, and support’.

It takes two to three years to get through the courts – by which time, the company has moved on to some other form of egregious conduct

Angela Flannery

Vice-Chair, IBA Communications Law Committee

Sama, meanwhile, declined to comment on the case as it’s ongoing. However, in a statement on its website, it explains that the company ended its work in content moderation in March 2023. It says it had ‘paid employees wages that are consistently three times the minimum wage and two times the living wage in Kenya’, with an average 2.2 times increase during 2022, and that employees receive healthcare and other benefits, as well as mandatory wellness breaks and one-to-one counselling. Going forward, Sama will be conducting audits – including by third parties – relating to pay, wellness and working conditions.

The attention generated by a case like Motaung’s can have ripple effects. May 2023 saw the formation of the first Africa Content Moderators Union. Such unions have an ‘incredibly important role to play’ in re-writing the power relationship between the Big Tech companies and those responsible for moderating their content, says Curling. ‘The people who are doing this work are crucial to companies like Meta’, she says. ‘Imagine if they all went on strike for a week.’

The cutting-edge nature of the tech industry is giving rise to a range of other employment disputes, too. Michael Newman, a partner at Leigh Day in the UK, specialises in bringing discrimination claims for individuals and groups. ‘Some of the issues at the interface of employment and Big Tech are unique’, he says, including the companies’ use of automated learning and artificial intelligence to ‘trim their workforce’. While using data collection and productivity metrics to rate performance can, ‘in some cases, be valid’, he warns against their ‘allure of objectivity’. As there’s ‘often not an opportunity for a human to review it, or it’s not looking at the information behind the data’, Newman says, ‘there’s room for error to creep in’.

He uses toilet breaks as an example. ‘Are you going more often because you are disabled, for example, or because you’re pregnant? Those are things that numbers can’t always capture and may give rise to an employment issue’, explains Newman. There have been instances where ‘individuals have been made redundant and the company hasn’t been able to explain how that decision has been reached, only that an algorithm came up with the answer’. And online tools used to rank job applications are often based on previous hiring decisions. ‘So, if you have a majority male workforce – and the tech industry is known to have a gender bias in terms of who already works in it – the system will replicate that.’

As a means of creating specific, useful change in a company’s technological practices, commentators have mixed views about litigation’s effectiveness, with some considering it too piecemeal or reactive. For Angela Flannery, it can be ‘too little, too late’, only tackling ‘specific conduct at a particular point’, she says. ‘It takes two to three years to get through the courts – by which time, the company has moved on to some other form of egregious conduct.’

Platzer at Jenner & Block says that while the ‘scope of immunity’ provided by Section 230 in the US has ‘drawn a fair amount of focus’, critics of its breadth ‘are often animated by very different objectives’. He sees a lack of agreement or common vision as a challenge to efforts at legislative change.

‘Disinfectant of sunlight’

The rise in private claims is symptomatic of frustration among campaigners and other industry players, who feel that relying on governments or the tech giants themselves to challenge their practices and to develop, implement and stick to new codes of conduct across the full extent of their businesses isn’t producing nearly enough industry change. ‘We felt like the power of these big companies was going unchecked’, says Curling, in discussing what motivates Foxglove. ‘They were doing a lot of “internal reflection”’, she says wryly, but ‘bluntly, no one was suing them’.

One development in the past few years in this regard has been Meta Chief Executive Officer Mark Zuckerberg’s decision to establish an arm’s-length Oversight Board. Beginning with 20 founding members from diverse backgrounds, the Board – which is modelled on the US judicial system, with its own charter – has negotiation and mediation powers and its decisions have precedential value. Since 2020, the Board has reviewed user appeals, Facebook policies and content moderation decisions, and has overturned some of those decisions. To ensure the Board’s independence, an irrevocable trust with $130m in initial funding was established, which covers its operational costs for over five years.

Self-regulation is one piece of an important set of collaborative actors

Paolo Carozza

Member, Meta Oversight Board

Board member Paolo Carozza is Professor of Law and Concurrent Professor of Political Science at the University of Notre Dame. He has a career-long background in human rights. ‘None of us are here to be apologists for Meta’, he says. ‘And we already have a track record: we’ve had an impact on increasing its transparency and changing some of its policies in terms of what’s left up or taken down.’ For example, Meta’s Facebook platform now has to say whether content was removed on the basis of automated processes or due to human review. This has also shed light – via the freedom of information requests it has enabled – on when post-removal requests have come from state actors or governments. Befitting his background, Carozza is keen on this ‘disinfectant of sunlight’.

But there’s considerable scepticism over the effectiveness of industry self-regulation in any form. Mishcon de Reya’s Lasserson believes that ‘given the nature of some of the more “questionable” business practices that have come to light [within the industry] in recent years, self-regulation is probably regarded as being a bit unrealistic’.

Carozza acknowledges this scepticism, saying that he and the other members of the Oversight Board know that it’s ‘still an experiment. Nothing like this has ever been done before’. But he flags what he says they’ve already achieved when it comes to transparency. ‘We hope it’ll be successful in the long run. We think it’s important that it should be a model for what happens in other places.’ And he adds that he doesn’t believe that anyone on the Oversight Board would ever say that ‘self-regulation is sufficient. We’d acknowledge – and insist on – that it was one piece of an important set of collaborative actors’.

From the insight into Meta he’s gained from being on the Oversight Board, Carozza believes that ‘individual litigants and cases simply aren’t adequate tools, by themselves’, to address systemic issues. ‘I hope to see a relationship between regulators, the company and independent sources of accountability, like the Oversight Board, where everyone recognises that we all have roles to play that are indispensable’, he says.

Truly holding Big Tech accountable for its actions feels analogous to the parable of the blind men and the elephant – where six men learn what an elephant is, but only to the extent that each is able to touch a separate part of the animal. ‘Inevitably, it’s going to have to be a multi-faceted approach’, says Michael Newman at Leigh Day. But whether the various parties – from governments and regulatory bodies to campaigners and litigants, to the companies themselves – can unite their differing views into a coherent, workable whole is unclear.

Still, changes are coming. The EU’s implementation of the DMA and the Digital Services Act (DSA) is potentially game-changing. In September 2023, the EU identified 22 so-called ‘gatekeeper’ services run by industry heavyweights Alphabet (owner of Google), Amazon, ByteDance (owner of TikTok), Meta and Microsoft. Each will have six months to demonstrate they are fully in compliance with the DMA’s obligations and prohibitions.

The DSA, meanwhile, is being closely watched and – in many cases – imitated ‘by regulators around the world’, says Carozza. ‘It provides for a variety of disclosure and transparency requirements that would be standardised across the major platforms.’

Tom Wicker is a freelance journalist and can be contacted at tomw@tomwicker.org